Peer Recognized

Make a name in academia

How to Write a Research Paper: the LEAP approach (+cheat sheet)

In this article I will show you how to write a research paper using the four LEAP writing steps. The LEAP academic writing approach is a step-by-step method for turning research results into a published paper.

The LEAP writing approach has been the cornerstone of the 70+ research papers that I have authored and the 3700+ citations these papers have accumulated within 9 years since the completion of my PhD. I hope the LEAP approach will help you just as much as it has helped me to make a real, tangible impact with my research.

What is the LEAP research paper writing approach?

I designed the LEAP writing approach for more than merely writing papers. My goal with the writing system was to show young scientists how to first think about research results and then how to efficiently write each section of the research paper.

In other words, you will see how to write a research paper by first analyzing the results and then building logical, persuasive arguments. In this way, instead of being afraid of writing a research paper, you will be able to rely on the paper writing process to help you with the most demanding task in getting published: thinking.

The four research paper writing steps according to the LEAP approach:

- Lay out the facts

- Explain the results

- Advertize the research

- Prepare for submission

I will show each of these steps in detail. You will also be able to download the LEAP cheat sheet to use with every paper you write.

But before I tell you how to efficiently write a research paper, I want to show you the problem with the way scientists typically write a research paper and why the LEAP approach is more efficient.

How scientists typically write a research paper (and why it isn’t efficient)

Writing a research paper can be tough, especially for a young scientist. Your reasoning needs to be persuasive and thorough enough to convince readers of your arguments. The description has to be derived from research evidence, from prior art, and from your own judgment. This is a tough feat to accomplish.

The figure below shows the sequence of the different parts of a typical research paper. Depending on the scientific journal, some sections might be merged or nonexistent, but the general outline of a research paper will remain very similar.

Here is the problem: Most people make the mistake of writing in this same sequence.

While the structure of scientific articles is designed to help the reader follow the research, it does little to help the scientist write the paper. This is because the layout of research articles starts with the broad (introduction) and narrows down to the specifics (results). See in the figure below how the research paper is structured in terms of the breadth of information that each section entails.

How to write a research paper according to the LEAP approach

For a scientist, it is much easier to start writing a research paper by laying out the facts in the narrow sections (i.e., results), then step back to describe them (i.e., write the discussion), and step back again to explain the broader picture in the introduction.

For example, it might feel intimidating to start writing a research paper by explaining your research’s global significance in the introduction, while it is easy to plot the figures in the results. When plotting the results, there is not much wiggle room: the results are what they are.

Starting to write a research paper from the results is also more fun because you finally get to see and understand the complete picture of the research that you have worked on.

Most importantly, following the LEAP approach will help you first make sense of the results yourself and then clearly communicate them to the readers. That is because the sequence of writing allows you to slowly understand the meaning of the results and then develop arguments for presenting to your readers.

I have personally been able to write and submit a research article in three short days using this method.

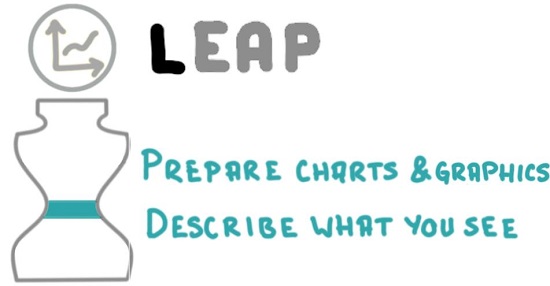

Step 1: Lay Out the Facts

You have worked long hours on a research project that has produced results and are no doubt curious to determine what they exactly mean. There is no better way to do this than by preparing figures, graphics and tables. This is what the first LEAP step is focused on – diving into the results.

How to prepare charts and tables for a research paper

Your first task is to try out different ways of visually demonstrating the research results. In many fields, the central items of a journal paper will be charts that are based on the data generated during research. In other fields, these might be conceptual diagrams, microscopy images, schematics and a number of other types of scientific graphics which should visually communicate the research study and its results to the readers. If you have a reasonably small number of data points, data tables might be useful as well.

Tips for preparing charts and tables

- Try multiple chart types but in the finished paper only use the one that best conveys the message you want to present to the readers

- Follow the eight chart design progressions for selecting and refining a data chart for your paper: https://peerrecognized.com/chart-progressions

- Prepare scientific graphics and visualizations for your paper using the scientific graphic design cheat sheet: https://peerrecognized.com/tools-for-creating-scientific-illustrations/

How to describe the results of your research

Now that you have your data charts, graphics and tables laid out in front of you, describe what you see in them. Seek to answer the question: What have I found? Your statements should progress in a logical sequence and be backed by the visual information. Since, at this point, you are simply explaining what everyone should be able to see for themselves, you can use a declarative tone: Figure X demonstrates that…

Tips for describing the research results:

- Answer the question: “What have I found?”

- Use a declarative tone since you are simply describing observations

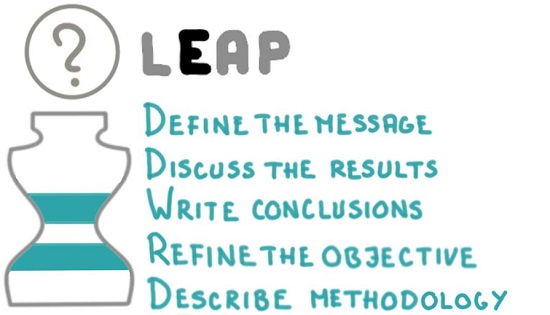

Step 2: Explain the results

The core aspect of your research paper is not actually the results; it is the explanation of their meaning. In the second LEAP step, you will do some heavy lifting by guiding the readers through the results using logic backed by previous scientific research.

How to define the Message of a research paper

To define the central message of your research paper, imagine how you would explain your research to a colleague in 20 seconds. If you succeed in effectively communicating your paper’s message, a reader should be able to recount your findings in a similarly concise way even a year after reading it. This clarity will increase the chances that someone uses the knowledge you generated, which in turn raises the likelihood of citations to your research paper.

Tips for defining the paper’s central message:

- Write the paper’s core message in a single sentence or two bullet points

- Write the core message in the header of the research paper manuscript

How to write the Discussion section of a research paper

In the discussion section you have to demonstrate why your research paper is worthy of publishing. In other words, you must now answer the all-important “So what?” question. How well you do so will ultimately define the success of your research paper.

Here are three steps to get started with writing the discussion section:

- Write bullet points of the things that convey the central message of the research article (these may evolve into subheadings later on).

- Make a list with the arguments or observations that support each idea.

- Finally, expand on each point to make full sentences and paragraphs.

Tips for writing the discussion section:

- What is the meaning of the results?

- Was the hypothesis confirmed?

- Write bullet points that support the core message

- List logical arguments for each bullet point, group them into sections

- Instead of repeating the research timeline, use a presentation sequence that best supports your logic

- Convert arguments to full paragraphs; be confident but do not overhype

- Refer to both supportive and contradicting research papers for maximum credibility

How to write the Conclusions of a research paper

Since some readers might just skim through your research paper and turn directly to the conclusions, it is a good idea to make the conclusions a standalone piece. In the first few sentences of the conclusions, briefly summarize the methodology and try to avoid using abbreviations (if you do, explain what they mean).

After this introduction, summarize the findings from the discussion section. Either paragraph style or bullet-point style conclusions can be used. I prefer the bullet-point style because it clearly separates the different conclusions and provides an easy-to-digest overview for the casual browser. It also forces me to be more succinct.

Tips for writing the conclusion section:

- Summarize the key findings, starting with the most important one

- Make conclusions standalone (short summary, avoid abbreviations)

- Add an optional take-home message and suggest future research in the last paragraph

How to refine the Objective of a research paper

The objective is a short, clear statement defining the paper’s research goals. It can be included either in the final paragraph of the introduction, or as a separate subsection after the introduction. Avoid writing long paragraphs with in-depth reasoning, references, and explanation of methodology since these belong in other sections. The paper’s objective can often be written in a single crisp sentence.

Tips for writing the objective section:

- The objective should ask the question that is answered by the central message of the research paper

- The research objective should be clear long before writing a paper. At this point, you are simply refining it to make sure it is addressed in the body of the paper.

How to write the Methodology section of your research paper

When writing the methodology section, aim for a depth of explanation that will allow readers to reproduce the study. This means that if you are using a novel method, you will have to describe it thoroughly. If, on the other hand, you applied a standardized method, or used an approach from another paper, it will be enough to briefly describe it with reference to the detailed original source.

Remember to also detail the research population, mention how you ensured representative sampling, and elaborate on what statistical methods you used to analyze the results.

Tips for writing the methodology section:

- Include enough detail to allow reproducing the research

- Provide references if the methods are known

- Create a methodology flow chart to add clarity

- Describe the research population, sampling methodology, statistical methods for result analysis

- Describe what methodology, test methods, materials, and sample groups were used in the research.

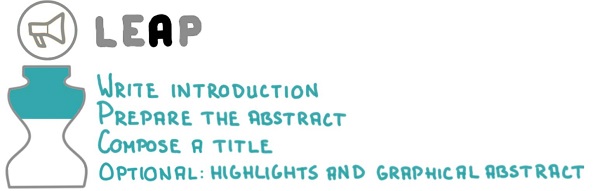

Step 3: Advertize the research

Step 3 of the LEAP writing approach is designed to entice the casual browser into reading your research paper. This advertising can be done with an informative title, an intriguing abstract, as well as a thorough explanation of the underlying need for doing the research within the introduction.

How to write the Introduction of a research paper

The introduction section should leave no doubt in the mind of the reader that what you are doing is important and that this work could push scientific knowledge forward. To do this convincingly, you will need to have a good knowledge of what is state-of-the-art in your field. You also need to be able to see the bigger picture in order to demonstrate the potential impacts of your research work.

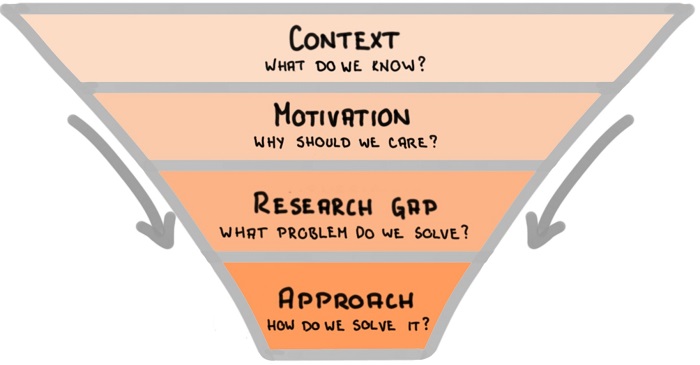

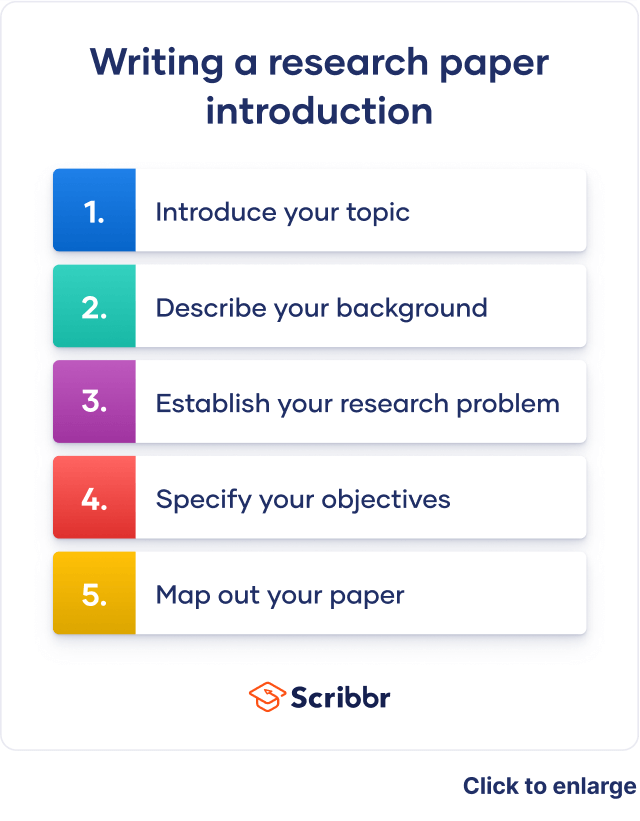

Think of the introduction as a funnel, going from wide to narrow, as shown in the figure below:

- Start with a brief context to explain what we already know,

- Follow with the motivation for the research study and explain why we should care about it,

- Explain the research gap you are going to bridge within this research paper,

- Describe the approach you will take to solve the problem.

Tips for writing the introduction section:

- Follow the Context – Motivation – Research gap – Approach funnel for writing the introduction

- Explain how others tried and how you plan to solve the research problem

- Do a thorough literature review before writing the introduction

- Start writing the introduction by using your own words, then add references from the literature

How to prepare the Abstract of a research paper

The abstract acts as your paper’s elevator pitch and is therefore best written only after the main text is finished. In this one short paragraph you must convince someone to take on the time-consuming task of reading your whole research article. So, make the abstract easy to read, intriguing, and self-explanatory; avoid jargon and abbreviations.

How to structure the abstract of a research paper:

- The abstract is a single paragraph that follows this structure:

- Problem: why did we research this

- Methodology: typically starts with the words “Here we…” that signal the start of your own contribution.

- Results: what we found from the research.

- Conclusions: show why the findings are important

How to compose a research paper Title

The title is the ultimate summary of a research paper. It must therefore entice someone looking for information to click on a link to it and continue reading the article. A title is also used for indexing purposes in scientific databases, so a representative and optimized title will play a large role in determining whether your research paper appears in search results at all.

Tips for coming up with a research paper title:

- Capture the curiosity of potential readers using a clear and descriptive title

- Include broad terms that are often searched

- Add details that uniquely identify the researched subject of your research paper

- Avoid jargon and abbreviations

- Use keywords as title extension (instead of duplicating the words) to increase the chance of appearing in search results

How to prepare Highlights and Graphical Abstract

Highlights are three to five short bullet-point style statements that convey the core findings of the research paper. Notice that the focus is on the findings, not on the process of getting there.

A graphical abstract placed next to the textual abstract visually summarizes the entire research paper in a single, easy-to-follow figure. I show how to create a graphical abstract in my book Research Data Visualization and Scientific Graphics.

Tips for preparing highlights and graphical abstract:

- In highlights show core findings of the research paper (instead of what you did in the study).

- In graphical abstract show take-home message or methodology of the research paper. Learn more about creating a graphical abstract in this article.

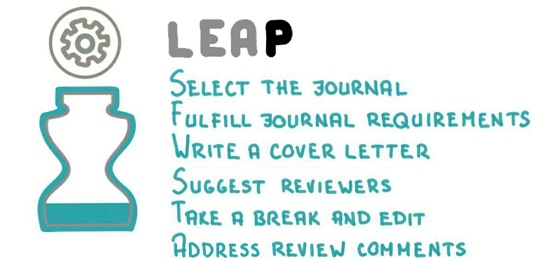

Step 4: Prepare for submission

Sometimes it seems that nuclear fusion will stop on the star closest to us (read: the sun will stop shining) before a submitted manuscript is published in a scientific journal. The publication process routinely takes a long time, and after submitting the manuscript you have very little control over what happens. To increase the chances of a quick publication, you must do your homework before submitting the manuscript. In the fourth LEAP step, you make sure that your research paper is published in the most appropriate journal as quickly and painlessly as possible.

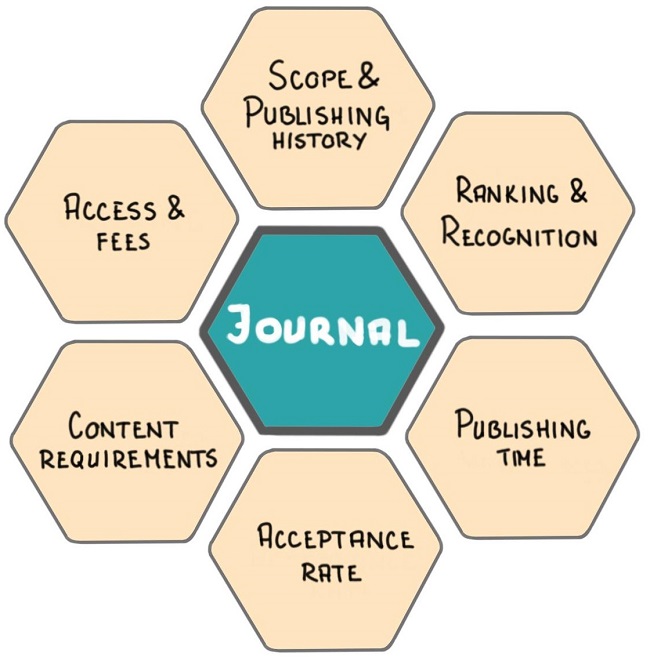

How to select a scientific Journal for your research paper

The best way to find a journal for your research paper is to review which journals you cited while preparing your manuscript. This source listing should provide some assurance that your own research paper, once published, will be among similar articles and, thus, among your field’s trusted sources.

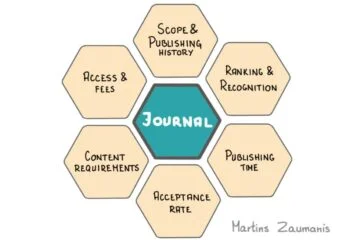

After this initial selection of a handful of scientific journals, consider the following six parameters for selecting the most appropriate journal for your research paper (read this article to review each step in detail):

- Scope and publishing history

- Ranking and Recognition

- Publishing time

- Acceptance rate

- Content requirements

- Access and Fees

How to select a journal for your research paper:

- Use the six parameters to select the most appropriate scientific journal for your research paper

- Use the following tools for journal selection: https://peerrecognized.com/journals

- Follow the journal’s “Authors guide” formatting requirements

How to Edit your manuscript

No one can write a finished research paper on their first attempt. Before submitting, make sure to take a break from your work for a couple of days, or even weeks. Try not to think about the manuscript during this time. Once it has faded from your memory, it is time to return and edit. The pause will allow you to read the manuscript from a fresh perspective and make edits as necessary.

I have summarized the most useful research paper editing tools in this article.

Tips for editing a research paper:

- Take time away from the research paper to forget about it; then return to edit

- Start by editing the content: structure, headings, paragraphs, logic, figures

- Continue by editing the grammar and language; perform a thorough language check using academic writing tools

- Read the entire paper out loud and correct what sounds weird

How to write a compelling Cover Letter for your paper

Begin the cover letter by stating the paper’s title and the type of paper you are submitting (review paper, research paper, short communication). Next, concisely explain why your study was performed, what was done, and what the key findings are. State why the results are important and what impact they might have in the field. Make sure you mention how your approach and findings relate to the scope of the journal in order to show why the article would be of interest to the journal’s readers.

I wrote a separate article that explains what to include in a cover letter here. You can also download a cover letter template from the article.

Tips for writing a cover letter:

- Explain how the findings of your research relate to the journal’s scope

- Tell what impact the research results will have

- Show why the research paper will interest the journal’s audience

- Add any legal statements as required in the journal’s guide for authors

How to Answer the Reviewers

Reviewers will often ask for new experiments, extended discussion, additional details on the experimental setup, and so forth. In principle, your primary winning tactic will be to agree with the reviewers and follow their suggestions whenever possible. After all, you must earn their blessing in order to get your paper published.

Be sure to answer each review query and stick to the point. In the response-to-reviewers document, state exactly where in the paper you have made changes. In the paper itself, highlight the changes using a different color. This way the reviewers are less likely to re-read the entire article and suggest new edits.

In cases when you don’t agree with the reviewers, it makes sense to answer more thoroughly. Reviewers are scientifically minded people and so, with enough logical and supported argument, they will eventually be willing to see things your way.

Tips for answering the reviewers:

- Agree with most review comments, but if you don’t, thoroughly explain why

- Highlight changes in the manuscript

- Do not take the comments personally and cool down before answering

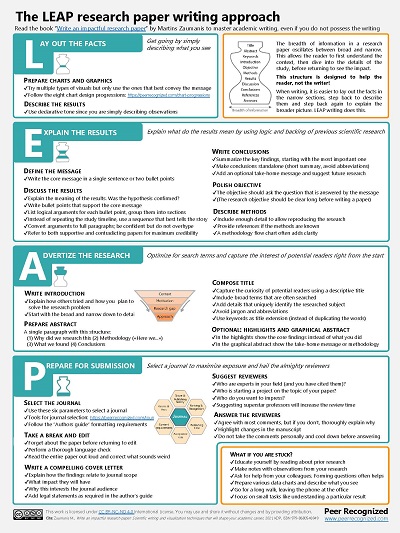

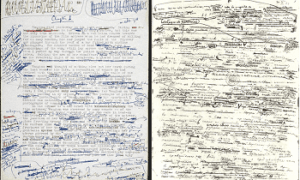

The LEAP research paper writing cheat sheet

Imagine that you are back in grad school and preparing to take an exam on the topic: “How to write a research paper”. As an exemplary student, you would, most naturally, create a cheat sheet summarizing the subject… Well, I did it for you.

This one-page summary of the LEAP research paper writing technique will remind you of the key research paper writing steps. Print it out and stick it to a wall in your office so that you can review it whenever you are writing a new research paper.

Now that we have gone through the four LEAP research paper writing steps, I hope you have a good idea of how to write a research paper. It can be an enjoyable process and once you get the hang of it, the four LEAP writing steps should even help you think about and interpret the research results. This process should enable you to write a well-structured, concise, and compelling research paper.

Have fun writing your next research paper. I hope it will turn out great!

Learn writing papers that get cited

The LEAP writing approach is a blueprint for writing research papers. But to be efficient and write papers that get cited, you need more than that.

My name is Martins Zaumanis and in my interactive course Research Paper Writing Masterclass I will show you how to visualize your research results, frame a message that convinces your readers, and write each section of the paper. Step-by-step.

And of course – you will learn to respond to the infamous Reviewer No. 2.

Hey! My name is Martins Zaumanis and I am a materials scientist in Switzerland (Google Scholar). As the first person in my family with a PhD, I have first-hand experience of the challenges starting scientists face in academia. With this blog, I want to help young researchers succeed in academia. I call the blog “Peer Recognized”, because peer recognition is what lifts academic careers and pushes science forward.

Besides this blog, I have written the Peer Recognized book series and created the Peer Recognized Academy offering interactive online courses.


Copyright © 2024 Martins Zaumanis


Research Paper – Structure, Examples and Writing Guide


Research Paper

Definition:

A research paper is a written document that presents the author’s original research, analysis, and interpretation of a specific topic or issue.

It is typically based on empirical evidence, and may involve qualitative or quantitative research methods, or a combination of both. The purpose of a research paper is to contribute new knowledge or insights to a particular field of study, and to demonstrate the author’s understanding of the existing literature and theories related to the topic.

Structure of Research Paper

The structure of a research paper typically follows a standard format, consisting of several sections that convey specific information about the research study. The following is a detailed explanation of the structure of a research paper:

Title Page

The title page contains the title of the paper, the name(s) of the author(s), and the affiliation(s) of the author(s). It also includes the date of submission and possibly the name of the journal or conference where the paper is to be published.

Abstract

The abstract is a brief summary of the research paper, typically ranging from 100 to 250 words. It should include the research question, the methods used, the key findings, and the implications of the results. The abstract should be written in a concise and clear manner to allow readers to quickly grasp the essence of the research.
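As a rough self-check against the 100 to 250 word range mentioned above, the length of a draft abstract can be counted with a few lines of Python. This is only a sketch: the function name `check_abstract_length` and its default limits are assumptions made for illustration, and the word limit stated by your target journal always takes precedence.

```python
def check_abstract_length(text, min_words=100, max_words=250):
    """Return a short verdict on the word count of a draft abstract.

    Words are counted by splitting on whitespace, which is a simple
    approximation; journals may count hyphenated terms differently.
    The default limits are illustrative assumptions.
    """
    word_count = len(text.split())
    if word_count < min_words:
        return f"too short: {word_count} words (minimum {min_words})"
    if word_count > max_words:
        return f"too long: {word_count} words (maximum {max_words})"
    return f"ok: {word_count} words"


if __name__ == "__main__":
    # Placeholder text standing in for a real draft abstract.
    draft = "This study investigates a placeholder topic. " * 20
    print(check_abstract_length(draft))
```

Passing different `min_words` and `max_words` values adapts the check to a specific journal's author guidelines.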

Introduction

The introduction section of a research paper provides background information about the research problem, the research question, and the research objectives. It also outlines the significance of the research, the research gap that it aims to fill, and the approach taken to address the research question. Finally, the introduction section ends with a clear statement of the research hypothesis or research question.

Literature Review

The literature review section of a research paper provides an overview of the existing literature on the topic of study. It includes a critical analysis and synthesis of the literature, highlighting the key concepts, themes, and debates. The literature review should also demonstrate the research gap and how the current study seeks to address it.

Methods

The methods section of a research paper describes the research design, the sample selection, the data collection and analysis procedures, and the statistical methods used to analyze the data. This section should provide sufficient detail for other researchers to replicate the study.

Results

The results section presents the findings of the research, using tables, graphs, and figures to illustrate the data. The findings should be presented in a clear and concise manner, with reference to the research question and hypothesis.

Discussion

The discussion section of a research paper interprets the findings and discusses their implications for the research question, the literature review, and the field of study. It should also address the limitations of the study and suggest future research directions.

Conclusion

The conclusion section summarizes the main findings of the study, restates the research question and hypothesis, and provides a final reflection on the significance of the research.

References

The references section provides a list of all the sources cited in the paper, following a specific citation style such as APA, MLA or Chicago.

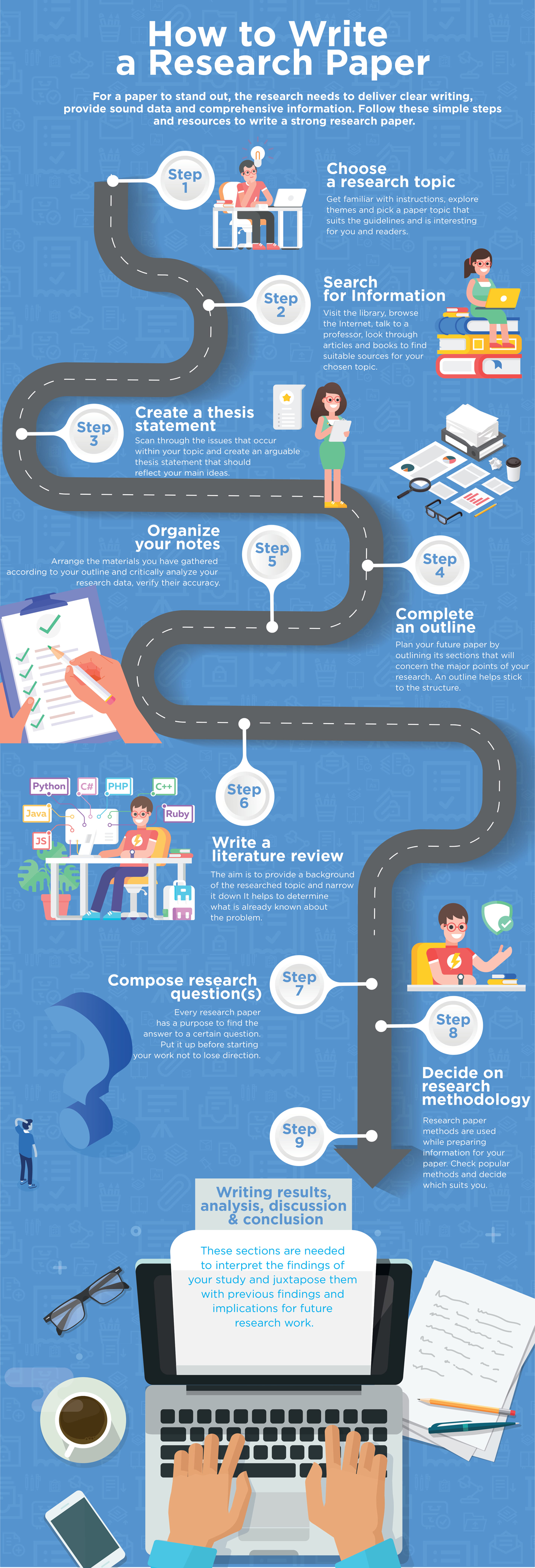

How to Write a Research Paper

You can write a research paper by following these steps:

- Choose a Topic: The first step is to select a topic that interests you and is relevant to your field of study. Brainstorm ideas and narrow down to a research question that is specific and researchable.

- Conduct a Literature Review: The literature review helps you identify the gap in the existing research and provides a basis for your research question. It also helps you to develop a theoretical framework and research hypothesis.

- Develop a Thesis Statement: The thesis statement is the main argument of your research paper. It should be clear, concise and specific to your research question.

- Plan your Research: Develop a research plan that outlines the methods, data sources, and data analysis procedures. This will help you to collect and analyze data effectively.

- Collect and Analyze Data: Collect data using various methods such as surveys, interviews, observations, or experiments. Analyze data using statistical tools or other qualitative methods.

- Organize your Paper: Organize your paper into sections such as Introduction, Literature Review, Methods, Results, Discussion, and Conclusion. Ensure that each section is coherent and follows a logical flow.

- Write your Paper: Start by writing the introduction, followed by the literature review, methods, results, discussion, and conclusion. Ensure that your writing is clear, concise, and follows the required formatting and citation styles.

- Edit and Proofread your Paper: Review your paper for grammar and spelling errors, and ensure that it is well-structured and easy to read. Ask someone else to review your paper to get feedback and suggestions for improvement.

- Cite your Sources: Ensure that you properly cite all sources used in your research paper. This is essential for giving credit to the original authors and avoiding plagiarism.
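The "Collect and Analyze Data" step in the list above can be illustrated with a small Python sketch that computes descriptive statistics and fits a simple least-squares line using only the standard library. The function names `describe` and `least_squares_fit` are hypothetical, and the numbers in the usage example are invented purely for illustration; they do not come from any real study. Real analyses usually rely on a dedicated statistics package and include significance testing, which this sketch omits.

```python
from statistics import mean, stdev


def describe(values):
    """Return basic descriptive statistics for a list of numbers."""
    return {"n": len(values), "mean": mean(values), "stdev": stdev(values)}


def least_squares_fit(x, y):
    """Fit y = slope * x + intercept by ordinary least squares.

    Raises ValueError if the inputs differ in length, contain fewer
    than two points, or if all x values are identical.
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x, mean_y = mean(x), mean(y)
    sum_sq_x = sum((xi - mean_x) ** 2 for xi in x)
    if sum_sq_x == 0:
        raise ValueError("x values must not all be identical")
    sum_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sum_xy / sum_sq_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


if __name__ == "__main__":
    # Invented illustrative data: daily hours of use and a survey score.
    hours = [0.5, 1.0, 2.0, 3.0, 4.0, 5.0]
    score = [8.0, 9.0, 12.0, 13.0, 17.0, 18.0]
    print(describe(hours))
    print(least_squares_fit(hours, score))
```

A positive slope in such a fit indicates an association only; as with any cross-sectional data, it does not establish causality.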

Research Paper Example

Note: The example research paper below is for illustrative purposes only and is not an actual research paper. Actual research papers may have different structures, contents, and formats depending on the field of study, research question, data collection and analysis methods, and other factors. Students should always consult with their professors or supervisors for specific guidelines and expectations for their research papers.

Sample research paper for students:

Title: The Impact of Social Media on Mental Health among Young Adults

Abstract: This study aims to investigate the impact of social media use on the mental health of young adults. A literature review was conducted to examine the existing research on the topic. A survey was then administered to 200 university students to collect data on their social media use, mental health status, and perceived impact of social media on their mental health. The results showed that social media use is positively associated with depression, anxiety, and stress. The study also found that social comparison, cyberbullying, and FOMO (Fear of Missing Out) are significant predictors of mental health problems among young adults.

Introduction: Social media has become an integral part of modern life, particularly among young adults. While social media has many benefits, including increased communication and social connectivity, it has also been associated with negative outcomes, such as addiction, cyberbullying, and mental health problems. This study aims to investigate the impact of social media use on the mental health of young adults.

Literature Review: The literature review highlights the existing research on the impact of social media use on mental health. The review shows that social media use is associated with depression, anxiety, stress, and other mental health problems. The review also identifies the factors that contribute to the negative impact of social media, including social comparison, cyberbullying, and FOMO.

Methods: A survey was administered to 200 university students to collect data on their social media use, mental health status, and perceived impact of social media on their mental health. The survey included questions on social media use, mental health status (measured using the DASS-21), and perceived impact of social media on their mental health. Data were analyzed using descriptive statistics and regression analysis.

Results: The results showed that social media use is positively associated with depression, anxiety, and stress. The study also found that social comparison, cyberbullying, and FOMO are significant predictors of mental health problems among young adults.

Discussion: The study’s findings suggest that social media use has a negative impact on the mental health of young adults. The study highlights the need for interventions that address the factors contributing to the negative impact of social media, such as social comparison, cyberbullying, and FOMO.

Conclusion: In conclusion, social media use has a significant impact on the mental health of young adults. The study’s findings underscore the need for interventions that promote healthy social media use and address the negative outcomes associated with social media use. Future research can explore the effectiveness of interventions aimed at reducing the negative impact of social media on mental health. Additionally, longitudinal studies can investigate the long-term effects of social media use on mental health.

Limitations: The study has some limitations, including the use of self-report measures and a cross-sectional design. The use of self-report measures may result in biased responses, and a cross-sectional design limits the ability to establish causality.

Implications: The study’s findings have implications for mental health professionals, educators, and policymakers. Mental health professionals can use the findings to develop interventions that address the negative impact of social media use on mental health. Educators can incorporate social media literacy into their curriculum to promote healthy social media use among young adults. Policymakers can use the findings to develop policies that protect young adults from the negative outcomes associated with social media use.

References:

- Twenge, J. M., & Campbell, W. K. (2018). Associations between screen time and lower psychological well-being among children and adolescents: Evidence from a population-based study. Preventive Medicine Reports, 12, 271-283.

- Primack, B. A., Shensa, A., Escobar-Viera, C. G., Barrett, E. L., Sidani, J. E., Colditz, J. B., … & James, A. E. (2017). Use of multiple social media platforms and symptoms of depression and anxiety: A nationally-representative study among US young adults. Computers in Human Behavior, 69, 1-9.

- Van der Meer, T. G., & Verhoeven, J. W. (2017). Social media and its impact on academic performance of students. Journal of Information Technology Education: Research, 16, 383-398.

Appendix: The survey used in this study is provided below.

Social Media and Mental Health Survey

- How often do you use social media per day?
  - Less than 30 minutes
  - 30 minutes to 1 hour
  - 1 to 2 hours
  - 2 to 4 hours
  - More than 4 hours

- Which social media platforms do you use?
  - Others (Please specify)

- How often do you experience the following on social media?
  - Social comparison (comparing yourself to others)
  - Cyberbullying
  - Fear of Missing Out (FOMO)

- Have you ever experienced any of the following mental health problems in the past month?

- Do you think social media use has a positive or negative impact on your mental health?
  - Very positive
  - Somewhat positive
  - Somewhat negative
  - Very negative

- In your opinion, which factors contribute to the negative impact of social media on mental health?
  - Social comparison

- In your opinion, what interventions could be effective in reducing the negative impact of social media on mental health?
  - Education on healthy social media use
  - Counseling for mental health problems caused by social media
  - Social media detox programs
  - Regulation of social media use

Thank you for your participation!

Applications of Research Papers

Research papers have several applications in various fields, including:

- Advancing knowledge: Research papers contribute to the advancement of knowledge by generating new insights, theories, and findings that can inform future research and practice. They help to answer important questions, clarify existing knowledge, and identify areas that require further investigation.

- Informing policy: Research papers can inform policy decisions by providing evidence-based recommendations for policymakers. They can help to identify gaps in current policies, evaluate the effectiveness of interventions, and inform the development of new policies and regulations.

- Improving practice: Research papers can improve practice by providing evidence-based guidance for professionals in various fields, including medicine, education, business, and psychology. They can inform the development of best practices, guidelines, and standards of care that can improve outcomes for individuals and organizations.

- Educating students: Research papers are often used as teaching tools in universities and colleges to educate students about research methods, data analysis, and academic writing. They help students to develop critical thinking skills, research skills, and communication skills that are essential for success in many careers.

- Fostering collaboration: Research papers can foster collaboration among researchers, practitioners, and policymakers by providing a platform for sharing knowledge and ideas. They can facilitate interdisciplinary collaborations and partnerships that can lead to innovative solutions to complex problems.

When to Write a Research Paper

Research papers are typically written when a person has completed a research project or when they have conducted a study and have obtained data or findings that they want to share with the academic or professional community. Research papers are usually written in academic settings, such as universities, but they can also be written in professional settings, such as research organizations, government agencies, or private companies.

Here are some common situations where a person might need to write a research paper:

- For academic purposes: Students in universities and colleges are often required to write research papers as part of their coursework, particularly in the social sciences, natural sciences, and humanities. Writing research papers helps students to develop research skills, critical thinking skills, and academic writing skills.

- For publication: Researchers often write research papers to publish their findings in academic journals or to present their work at academic conferences. Publishing research papers is an important way to disseminate research findings to the academic community and to establish oneself as an expert in a particular field.

- To inform policy or practice: Researchers may write research papers to inform policy decisions or to improve practice in various fields. Research findings can be used to inform the development of policies, guidelines, and best practices that can improve outcomes for individuals and organizations.

- To share new insights or ideas: Researchers may write research papers to share new insights or ideas with the academic or professional community. They may present new theories, propose new research methods, or challenge existing paradigms in their field.

Purpose of a Research Paper

The purpose of a research paper is to present the results of a study or investigation in a clear, concise, and structured manner. Research papers are written to communicate new knowledge, ideas, or findings to a specific audience, such as researchers, scholars, practitioners, or policymakers. The primary purposes of a research paper are:

- To contribute to the body of knowledge: Research papers aim to add new knowledge or insights to a particular field or discipline. They do this by reporting the results of empirical studies, reviewing and synthesizing existing literature, proposing new theories, or providing new perspectives on a topic.

- To inform or persuade: Research papers are written to inform or persuade the reader about a particular issue, topic, or phenomenon. They present evidence and arguments to support their claims and seek to persuade the reader of the validity of their findings or recommendations.

- To advance the field: Research papers seek to advance the field or discipline by identifying gaps in knowledge, proposing new research questions or approaches, or challenging existing assumptions or paradigms. They aim to contribute to ongoing debates and discussions within a field and to stimulate further research and inquiry.

- To demonstrate research skills: Research papers demonstrate the author’s research skills, including their ability to design and conduct a study, collect and analyze data, and interpret and communicate findings. They also demonstrate the author’s ability to critically evaluate existing literature, synthesize information from multiple sources, and write in a clear and structured manner.

Characteristics of a Research Paper

Research papers have several characteristics that distinguish them from other forms of academic or professional writing. Here are some common characteristics of research papers:

- Evidence-based: Research papers are based on empirical evidence, which is collected through rigorous research methods such as experiments, surveys, observations, or interviews. They rely on objective data and facts to support their claims and conclusions.

- Structured and organized: Research papers have a clear and logical structure, with sections such as introduction, literature review, methods, results, discussion, and conclusion. They are organized in a way that helps the reader to follow the argument and understand the findings.

- Formal and objective: Research papers are written in a formal and objective tone, with an emphasis on clarity, precision, and accuracy. They avoid subjective language or personal opinions and instead rely on objective data and analysis to support their arguments.

- Citations and references: Research papers include citations and references to acknowledge the sources of information and ideas used in the paper. They use a specific citation style, such as APA, MLA, or Chicago, to ensure consistency and accuracy.

- Peer-reviewed: Research papers are often peer-reviewed, which means they are evaluated by other experts in the field before they are published. Peer review is intended to check that the research is of high quality, meets ethical standards, and contributes to the advancement of knowledge in the field.

- Objective and unbiased: Research papers strive to be objective and unbiased in their presentation of the findings. They avoid personal biases or preconceptions and instead rely on the data and analysis to draw conclusions.

Advantages of Research Papers

Research papers have many advantages, both for the individual researcher and for the broader academic and professional community. Here are some advantages of research papers:

- Contribution to knowledge: Research papers contribute to the body of knowledge in a particular field or discipline. They add new information, insights, and perspectives to existing literature and help advance the understanding of a particular phenomenon or issue.

- Opportunity for intellectual growth: Research papers provide an opportunity for intellectual growth for the researcher. They require critical thinking, problem-solving, and creativity, which can help develop the researcher’s skills and knowledge.

- Career advancement: Research papers can help advance the researcher’s career by demonstrating their expertise and contributions to the field. They can also lead to new research opportunities, collaborations, and funding.

- Academic recognition: Research papers can lead to academic recognition in the form of awards, grants, or invitations to speak at conferences or events. They can also contribute to the researcher’s reputation and standing in the field.

- Impact on policy and practice: Research papers can have a significant impact on policy and practice. They can inform policy decisions, guide practice, and lead to changes in laws, regulations, or procedures.

- Advancement of society: Research papers can contribute to the advancement of society by addressing important issues, identifying solutions to problems, and promoting social justice and equality.

Limitations of Research Papers

Research papers also have some limitations that should be considered when interpreting their findings or implications. Here are some common limitations of research papers:

- Limited generalizability: Research findings may not be generalizable to other populations, settings, or contexts. Studies often use specific samples or conditions that may not reflect the broader population or real-world situations.

- Potential for bias: Research papers may be biased due to factors such as sample selection, measurement errors, or researcher biases. It is important to evaluate the quality of the research design and methods used to ensure that the findings are valid and reliable.

- Ethical concerns: Research papers may raise ethical concerns, such as the use of vulnerable populations or invasive procedures. Researchers must adhere to ethical guidelines and obtain informed consent from participants to ensure that the research is conducted in a responsible and respectful manner.

- Limitations of methodology: Research papers may be limited by the methodology used to collect and analyze data. For example, certain research methods may not capture the complexity or nuance of a particular phenomenon, or may not be appropriate for certain research questions.

- Publication bias: Research papers may be subject to publication bias, where positive or significant findings are more likely to be published than negative or non-significant findings. This can skew the overall findings of a particular area of research.

- Time and resource constraints: Research papers may be limited by time and resource constraints, which can affect the quality and scope of the research. Researchers may not have access to certain data or resources, or may be unable to conduct long-term studies due to practical limitations.

About the author

Muhammad Hassan

Researcher, Academic Writer, Web developer


- How to write a research paper

Last updated: 11 January 2024

With proper planning, knowledge, and framework, completing a research paper can be a fulfilling and exciting experience.

Though it might initially sound slightly intimidating, this guide will help you embrace the challenge.

By documenting your findings, you can inspire others and make a difference in your field. Here's how you can make your research paper unique and comprehensive.

- What is a research paper?

Research papers allow you to demonstrate your knowledge and understanding of a particular topic. These papers are usually lengthier and more detailed than typical essays, requiring deeper insight into the chosen topic.

To write a research paper, you must first choose a topic that interests you and is relevant to the field of study. Once you’ve selected your topic, gathering as many relevant resources as possible, including books, scholarly articles, credible websites, and other academic materials, is essential. You must then read and analyze these sources, summarizing their key points and identifying gaps in the current research.

You can formulate your own ideas and opinions once you thoroughly understand the existing research. Getting there might involve conducting original research, gathering data, or analyzing existing data sets. It could also involve presenting an original argument or interpretation of the existing research.

Writing a successful research paper involves presenting your findings clearly and engagingly, which might involve using charts, graphs, or other visual aids to present your data and using concise language to explain your findings. You must also ensure your paper adheres to relevant academic formatting guidelines, including proper citations and references.

Overall, writing a research paper requires a significant amount of time, effort, and attention to detail. However, it is also an enriching experience that allows you to delve deeply into a subject that interests you and contribute to the existing body of knowledge in your chosen field.

- How long should a research paper be?

Research papers are deep dives into a topic. Therefore, they tend to be longer pieces of work than essays or opinion pieces.

However, a suitable length depends on the complexity of the topic and your level of expertise. For instance, are you a first-year college student or an experienced professional?

Also, remember that the best research papers provide valuable information for the benefit of others. Therefore, the quality of information matters most, not necessarily the length. Being concise is valuable.

Following these best practice steps will help keep your process simple and productive:

1. Gain a deep understanding of the expectations

Before diving into your intended topic or beginning the research phase, take some time to orient yourself. If a specific topic has been assigned to you, it’s essential to understand the question thoroughly and organize your planning and approach in response. Pay attention to the key requirements and ensure you align your writing accordingly.

This preparation step entails:

Deeply understanding the task or assignment

Being clear about the expected format and length

Familiarizing yourself with the citation and referencing requirements

Understanding any defined limits for your research contribution

Where applicable, speaking to your professor or research supervisor for further clarification

2. Choose your research topic

Select a research topic that aligns with both your interests and available resources. Ideally, focus on a field where you possess significant experience and analytical skills. In crafting your research paper, it's crucial to go beyond summarizing existing data and contribute fresh insights to the chosen area.

Consider narrowing your focus to a specific aspect of the topic. For example, if exploring the link between technology and mental health, delve into how social media use during the pandemic impacts the well-being of college students. Conducting interviews and surveys with students could provide firsthand data and unique perspectives, adding substantial value to the existing knowledge.

When finalizing your topic, adhere to legal and ethical norms in the relevant area (this ensures the integrity of your research, protects participants' rights, upholds intellectual property standards, and ensures transparency and accountability). Following these principles not only maintains the credibility of your work but also builds trust within your academic or professional community.

For instance, in writing about medical research, consider legal and ethical norms, including patient confidentiality laws and informed consent requirements. Similarly, if analyzing user data on social media platforms, be mindful of data privacy regulations, ensuring compliance with laws governing personal information collection and use. Aligning with legal and ethical standards not only avoids potential issues but also underscores the responsible conduct of your research.

3. Gather preliminary research

Once you’ve landed on your topic, it’s time to explore it further. You’ll want to discover more about available resources and existing research relevant to your assignment at this stage.

This exploratory phase is vital as you may discover issues with your original idea or realize you have insufficient resources to explore the topic effectively. This key bit of groundwork allows you to redirect your research topic in a different, more feasible, or more relevant direction if necessary.

Spending ample time at this stage ensures you gather everything you need, learn as much as you can about the topic, and discover gaps where the topic has yet to be sufficiently covered, offering an opportunity to research it further.

4. Define your research question

To produce a well-structured and focused paper, it is imperative to formulate a clear and precise research question that will guide your work. Your research question must be informed by the existing literature and tailored to the scope and objectives of your project. By refining your focus, you can produce a thoughtful and engaging paper that effectively communicates your ideas to your readers.

5. Write a thesis statement

A thesis statement is a one-to-two-sentence summary of your research paper's main argument or direction. It captures the intent of the paper, both as a guide for you while writing and for anyone wanting to know more about the research.

A strong thesis statement is:

Concise and clear: Explain your case in simple sentences (avoid covering multiple ideas). It might help to think of this section as an elevator pitch.

Specific: Ensure that there is no ambiguity in your statement and that your summary covers the points argued in the paper.

Debatable: A thesis statement puts forward a specific argument––it is not merely a statement but a debatable point that can be analyzed and discussed.

Here are three thesis statement examples from different disciplines:

Psychology thesis example: "We're studying adults aged 25-40 to see if taking short breaks for mindfulness can help with stress. Our goal is to find practical ways to manage anxiety better."

Environmental science thesis example: "This research paper looks into how having more city parks might make the air cleaner and keep people healthier. I want to find out if more green spaces means breathing fewer carcinogens in big cities."

UX research thesis example: "This study focuses on improving mobile banking for older adults using ethnographic research, eye-tracking analysis, and interactive prototyping. We investigate the usefulness of eye-tracking analysis with older individuals, aiming to spark debate and offer fresh perspectives on UX design and digital inclusivity for the aging population."

6. Conduct in-depth research

A research paper doesn’t just include research that you’ve uncovered from other papers and studies; it includes your fresh insights, too. You will seek to become an expert on your topic, understanding the nuances in the current leading theories, and you will analyze existing research and add your own thinking and discoveries. It's crucial to conduct well-designed research that is rigorous, robust, and based on reliable sources. A research paper that lacks evidence or is biased won't benefit the academic community or the general public. Examining the topic thoroughly and furthering its understanding through high-quality research is therefore essential, and that usually means conducting new research. Depending on the area under investigation, you may conduct surveys, interviews, diary studies, or observational research to uncover new insights or bolster current claims.

7. Determine supporting evidence

Not every piece of research you’ve discovered will be relevant to your research paper. Select and categorize the most meaningful evidence to include alongside your own discoveries. It's equally important to include evidence that doesn't support your claims, to avoid exclusion bias and ensure a fair research paper.

8. Write a research paper outline

Before diving in and writing the whole paper, start with an outline. It will help you to see if more research is needed, and it will provide a framework by which to write a more compelling paper. Your supervisor may even request an outline to approve before beginning to write the first draft of the full paper. An outline will include your topic, thesis statement, key headings, short summaries of the research, and your arguments.

9. Write your first draft

Once you feel confident about your outline and sources, it’s time to write your first draft. While penning a long piece of content can be intimidating, if you’ve laid the groundwork, you will have a structure to help you move steadily through each section. To keep up motivation and inspiration, it’s often best to keep the pace quick. Stopping for long periods can interrupt your flow and make jumping back in harder than writing when things are fresh in your mind.

10. Cite your sources correctly

It's always a good practice to give credit where it's due, and the same goes for citing any works that have influenced your paper. Building your arguments on credible references adds value and authenticity to your research. In the formatting guidelines section, you’ll find an overview of different citation styles (MLA, CMOS, or APA), which will help you meet any publishing or academic requirements and strengthen your paper's credibility. It is essential to follow the guidelines provided by your school or the publication you are submitting to ensure the accuracy and relevance of your citations.

11. Ensure your work is original

It is crucial to ensure the originality of your paper, as plagiarism can lead to serious consequences. To avoid plagiarism, you should use proper paraphrasing and quoting techniques. Paraphrasing is rewriting a text in your own words while maintaining the original meaning. Quoting is reproducing the source's exact words inside quotation marks. In both cases, giving credit to the original author or source is essential whenever you borrow their ideas or words. You can also use plagiarism detection tools such as Scribbr or Grammarly to check the originality of your paper. These tools compare your draft writing to a vast database of online sources. If you find any accidental plagiarism, you should correct it immediately by rephrasing or citing the source.
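To make the idea of comparing a draft against a source concrete, here is a minimal Python sketch that measures how many of a draft's three-word sequences also appear in a source text. It is only an illustration of the general principle: the function names `word_ngrams` and `overlap_ratio` are hypothetical, and this is not a description of how Scribbr, Grammarly, or any other tool actually works.

```python
def word_ngrams(text, n=3):
    """Return the set of n-word sequences in a text (lowercased, split on whitespace)."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(draft, source, n=3):
    """Share of the draft's n-word sequences that also occur in the source (0.0 to 1.0)."""
    draft_grams = word_ngrams(draft, n)
    if not draft_grams:
        # Draft is shorter than n words, so there is nothing to compare.
        return 0.0
    shared = draft_grams & word_ngrams(source, n)
    return len(shared) / len(draft_grams)


# Hypothetical example texts, written for this illustration only.
source = "studies show social media use is associated with stress in adults"
copied = "social media use is associated with stress"
paraphrased = "heavy use of social platforms correlates with higher stress"

print(overlap_ratio(copied, source))       # every three-word sequence is shared
print(overlap_ratio(paraphrased, source))  # no three-word sequence is shared
```

A high ratio only flags passages worth a second look. A properly quoted and cited passage will also score high, so the number is a prompt for review rather than a verdict.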

12. Revise, edit, and proofread

One of the essential qualities of excellent writers is their ability to understand the importance of editing and proofreading. Even though it's tempting to call it a day once you've finished your writing, editing your work can significantly improve its quality. It's natural to overlook the weaker areas when you've just finished writing a paper. Therefore, it's best to take a break of a day or two, or even up to a week, to refresh your mind. This way, you can return to your work with a new perspective. After some breathing room, you can spot any inconsistencies, spelling and grammar errors, typos, or missing citations and correct them.

- The best research paper format

The format of your research paper should align with the requirements set forth by your college, school, or target publication.

There is no one “best” format, per se. Depending on the stated requirements, you may need to include the following elements:

Title page: The title page of a research paper typically includes the title, author's name, and institutional affiliation and may include additional information such as a course name or instructor's name.

Table of contents: Include a table of contents to make it easy for readers to find specific sections of your paper.

Abstract: The abstract is a brief summary of the paper, covering its purpose, methods, main findings, and conclusions.

Methods: In this section, describe the research methods used. This may include collecting data, conducting interviews, or doing field research.

Results: Report the findings of your research in this section, leaving their interpretation for the discussion.

Discussion: In this section, discuss the implications of your research. Be sure to mention any significant limitations to your approach and suggest areas for further research.

Tables, charts, and illustrations: Use tables, charts, and illustrations to help convey your research findings and make them easier to understand.

Works cited or reference page: Include a works cited or reference page to give credit to the sources that you used to conduct your research.

Bibliography: Provide a list of all the sources you consulted while conducting your research.

Dedication and acknowledgments: Optionally, you may include a dedication and acknowledgments section to thank individuals who helped you with your research.

- General style and formatting guidelines

Formatting your research paper correctly means it complies with the criteria of the college, journal, or other publication you submit it to.

Research papers tend to follow the American Psychological Association (APA), Modern Language Association (MLA), or Chicago Manual of Style (CMOS) guidelines.

Here’s how each style guide is typically used:

Chicago Manual of Style (CMOS):

CMOS is a versatile style guide used for various types of writing. It's known for its flexibility and use in the humanities. CMOS provides guidelines for citations, formatting, and overall writing style. It allows for both footnotes and in-text citations, giving writers options based on their preferences or publication requirements.

American Psychological Association (APA):

APA is common in the social sciences. It’s hailed for its clarity and emphasis on precision. It has specific rules for citing sources, creating references, and formatting papers. APA style uses in-text citations with an accompanying reference list. It's designed to convey information efficiently and is widely used in academic and scientific writing.

Modern Language Association (MLA):

MLA is widely used in the humanities, especially literature and language studies. It emphasizes the author-page format for in-text citations and provides guidelines for creating a "Works Cited" page. MLA is known for its focus on the author's name and the literary works cited. It’s frequently used in disciplines that prioritize literary analysis and critical thinking.

To confirm you're using the latest style guide, check the official website or publisher's site for updates, consult academic resources, and verify the guide's publication date. Online platforms and educational resources may also provide summaries and alerts about any revisions or additions to the style guide.

Citing sources

When working on your research paper, it's important to cite the sources you used properly. Your citation style will guide you through this process. Generally, there are two parts to citing a source in your research paper:

First, provide a brief citation in the body of your paper. This is also known as a parenthetical or in-text citation.

Second, include a full citation in the reference list at the end of your paper.

Depending on the style, citations appear as in-text citations, footnotes, and reference list entries:

In-text citations in author-date styles such as APA include the author's surname and the year of publication.

Footnotes appear at the bottom of each page of your research paper. They may also be summarized within a reference list at the end of the paper.

A reference list includes all of the research used within the paper at the end of the document. It should include the author, date, paper title, and publisher listed in the order that aligns with your citation style.
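As a rough illustration of how an in-text citation and its reference list entry are built from the same source details, here is a minimal Python sketch in a simplified author-date style. The `Source` record, the helper names, and the example source are all hypothetical, and the output is deliberately simplified; always check the details against the official guide for your citation style.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Source:
    """Hypothetical record holding the details a citation needs."""
    authors: List[str]  # each as "Surname, Initials", e.g. "Doe, J."
    year: int
    title: str
    container: str      # journal or publisher


def in_text(source):
    """Brief author-date citation for the body of the paper."""
    surnames = [author.split(",")[0] for author in source.authors]
    if len(surnames) == 1:
        lead = surnames[0]
    elif len(surnames) == 2:
        lead = f"{surnames[0]} & {surnames[1]}"
    else:
        lead = f"{surnames[0]} et al."
    return f"({lead}, {source.year})"


def reference_entry(source):
    """Full entry for the reference list: author, date, title, publisher."""
    if len(source.authors) == 1:
        names = source.authors[0]
    else:
        names = ", ".join(source.authors[:-1]) + ", & " + source.authors[-1]
    return f"{names} ({source.year}). {source.title}. {source.container}."


# Placeholder source invented for this example; it is not a real publication.
example = Source(["Doe, J.", "Roe, R."], 2020, "An example title", "Journal of Examples")

print(in_text(example))          # (Doe & Roe, 2020)
print(reference_entry(example))  # Doe, J., & Roe, R. (2020). An example title. Journal of Examples.
```

Keeping source details in one structured place, whether in code or in a reference manager, means the in-text citation and the reference list entry cannot drift apart.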

10 research paper writing tips:

Following some best practices is essential to writing a research paper that contributes to your field of study and creates a positive impact.

These tactics will help you structure your argument effectively and ensure your work benefits others:

Clear and precise language: Ensure your language is unambiguous. Use academic language appropriately, but keep it simple. Also, provide clear takeaways for your audience.

Effective idea separation: Organize the vast amount of information and sources in your paper with paragraphs and titles. Create easily digestible sections for your readers to navigate through.

Compelling intro: Craft an engaging introduction that captures your reader's interest. Hook your audience and motivate them to continue reading.

Thorough revision and editing: Take the time to review and edit your paper comprehensively. Use tools like Grammarly to detect and correct small, overlooked errors.

Thesis precision: Develop a clear and concise thesis statement that guides your paper. Ensure that your thesis aligns with your research's overall purpose and contribution.

Logical flow of ideas: Maintain a logical progression throughout the paper. Use transitions effectively to connect different sections and maintain coherence.

Critical evaluation of sources: Evaluate and critically assess the relevance and reliability of your sources. Ensure that your research is based on credible and up-to-date information.

Thematic consistency: Maintain a consistent theme throughout the paper. Ensure that all sections contribute cohesively to the overall argument.

Relevant supporting evidence: Provide concise and relevant evidence to support your arguments. Avoid unnecessary details that may distract from the main points.

Embrace counterarguments: Acknowledge and address opposing views to strengthen your position. Show that you have considered alternative arguments in your field.

7 research tips

If you want your paper to not only be well-written but also contribute to the progress of human knowledge, consider these tips to take your paper to the next level:

Selecting the appropriate topic: The topic you select should align with your area of expertise, comply with the requirements of your project, and have sufficient resources for a comprehensive investigation.

Use academic databases: Academic databases such as PubMed, Google Scholar, and JSTOR offer a wealth of research papers that can help you discover everything you need to know about your chosen topic.

Critically evaluate sources: It is important not to accept research findings at face value. Instead, it is crucial to critically analyze the information to avoid jumping to conclusions or overlooking important details. A well-written research paper requires a critical analysis with thorough reasoning to support claims.

Diversify your sources: Expand your research horizons by exploring a variety of sources beyond the standard databases. Utilize books, conference proceedings, and interviews to gather diverse perspectives and enrich your understanding of the topic.

Take detailed notes: Detailed note-taking is crucial during research and can help you form the outline and body of your paper.

Stay current on trends: Keep abreast of the latest developments in your field by regularly checking for recent publications. Subscribe to newsletters, follow relevant journals, and attend conferences to stay informed about emerging trends and advancements.

Engage in peer review: Seek feedback from peers or mentors to ensure the rigor and validity of your research. Peer review helps identify potential weaknesses in your methodology and strengthens the overall credibility of your findings.

The real-world impact of research papers

Writing a research paper is more than an academic or business exercise. The experience provides an opportunity to explore a subject in depth, broaden one's understanding, and arrive at meaningful conclusions. With careful planning, dedication, and hard work, writing a research paper can be a fulfilling and enriching experience that contributes to advancing knowledge.

How do I publish my research paper?

Many academics wish to publish their research papers. Publishing is challenging, but your paper is more likely to gain traction if it presents new information and is well written. To publish your research paper, find a target publication, thoroughly read its guidelines, format your paper accordingly, and submit it according to its instructions. You may need to include a cover letter, too. After submission, your paper may be peer-reviewed by experts to assess its legitimacy, quality, originality, and methodology. Following review, the publication will inform you whether your paper has been accepted or rejected.

What is a good opening sentence for a research paper?

Beginning your research paper with a compelling introduction can ensure readers are interested in going further. A relevant quote, a compelling statistic, or a bold argument can start the paper and hook your reader. Remember, though, that the most important aspect of a research paper is the quality of the information, not necessarily your storytelling ability, so ensure anything you write aligns with your goals.

Research paper vs. a research proposal—what’s the difference?

While some may confuse research papers and proposals, they are different documents.

A research proposal comes before a research paper. It is a detailed document that outlines an intended area of exploration. It includes the research topic, methodology, timeline, sources, and potential conclusions. Research proposals are often required when seeking approval to conduct research.

A research paper is a summary of research findings. A research paper follows a structured format to present those findings and construct an argument or conclusion.


Research Methods: A Student's Comprehensive Guide: Structure


Research Paper

Welcome to the art of crafting a research paper! Think of this as your roadmap to creating a well-structured and impactful study. We’ll walk you through each crucial component—from introducing your topic with flair to wrapping up with a strong conclusion. Whether you're diving into your first research project or polishing your latest masterpiece, this guide is here to make the journey smoother and more enjoyable. Get ready to turn your research into a compelling narrative that not only showcases your findings but also captivates your readers.

Research Paper Structure: A Snapshot

Before diving into the individual components, let's take a quick look at the full structure of a research paper. This snapshot will help you visualize how each section fits together to form a cohesive and well-organized paper.

Introduction

- Introduce your topic and research question.

- Provide background and context to set up your study.

Literature Review

- Summarize relevant existing research.

- Highlight key studies, theories, and gaps in the literature.

Methodology

- Describe your research design and methods.

- Explain your data collection and analysis processes.

Results

- Present your findings clearly.

- Use visuals, like charts and tables, to enhance understanding.

Discussion

- Analyze and interpret the results.

- Discuss the broader implications of your findings and acknowledge limitations.

Conclusion

- Recap your key findings.

- Suggest areas for future research and offer final reflections.

With this snapshot, you now have a high-level view of the main components of your research paper. Each section is covered in detail below.

Introduction

The introduction serves as your reader's first impression of your paper. It should draw them in with a compelling overview of your topic, clearly outline your research question or thesis, and establish the importance of your study.

Key Components

Opening Statement

- Start strong with an attention-grabbing hook: a striking fact, thought-provoking quote, or an interesting anecdote that relates to your research.

Background Information

- Provide necessary context to help readers understand the relevance and scope of your study. You can include key historical information, theoretical context, or a brief overview of previous research.

Research Question or Thesis Statement

- This is the heart of your introduction. State your research question or thesis in a clear, concise manner, so readers know exactly what you are investigating.

Scope and Objectives

- Clearly define the boundaries of your research. What will your paper cover, and what will it not address? This helps frame your work for readers.

Significance of the Study

- Explain why your research matters. Does it fill a gap in existing research? Is it practically useful? Emphasize the value and contribution your paper brings to the field.

Tips for Crafting a Strong Introduction

- Be Engaging: Your opening should grab attention and encourage the reader to keep going.

- Be Clear: Avoid ambiguity—clearly state your research question and purpose.

- Provide Context: Background information is essential to help the reader understand the topic, but avoid overwhelming them with too much detail at this stage.

- Stay Focused: Keep the introduction concise but informative, setting the tone for the rest of your paper.

Literature Review

The literature review is where you showcase the existing research that relates to your topic. It's your chance to demonstrate your understanding of the academic conversation and position your research within that context.

Summarizing Existing Research

- Review relevant studies, theories, and findings that directly relate to your research question. This provides a foundation for your paper and shows that your study is grounded in the existing body of work.

Highlighting Key Studies

- Identify the most influential or significant research in your field. These are the works that have shaped the current understanding of your topic, and they should be emphasized in your review.

Identifying Gaps or Controversies

- Point out areas where there is limited research, conflicting findings, or ongoing debates. These gaps or discrepancies provide justification for your own research.

Establishing Your Research’s Relevance

- Explain how your research contributes to the field. Whether you’re addressing a gap, building on existing studies, or proposing something new, clearly indicate how your work fits into the larger picture.

Tips for a Strong Literature Review

- Stay Focused: Only include studies that are directly relevant to your research question. Avoid summarizing every piece of literature you've read.

- Be Critical: Don’t just summarize—critically assess the strengths and weaknesses of the studies you include.

- Organize Effectively: Structure your review in a logical order, grouping studies by themes, methodologies, or findings.

- Show Connections: Discuss how different studies relate to one another and to your research. This helps build a coherent narrative.

Methodology

The methodology section details how you conducted your research. This is where you explain your approach, so others can understand and potentially replicate your study.

Research Design

- Outline the overall design of your study. Are you using qualitative, quantitative, or mixed methods? Define the type of research you're conducting (e.g., case study, survey, experiment).

Data Collection

- Explain how you gathered your data. Were interviews conducted? Surveys distributed? Or perhaps you collected data through observation or archival research. Be specific about the tools, instruments, or platforms you used.

Participants and Sampling

- If applicable, describe your sample group. Who participated in your study? How were they selected? Include details like the size of your sample and any inclusion/exclusion criteria.

Data Analysis

- Discuss how you analyzed your data. Did you use statistical methods, thematic analysis, coding, or another technique? Make sure to explain why these methods were appropriate for your research question.

Ethical Considerations

- Briefly mention any ethical protocols you followed, such as obtaining consent from participants or ensuring anonymity. If your research involved sensitive topics, this is especially important to address.

Tips for Writing Your Methodology

- Be Detailed but Clear: Provide enough detail so your methods can be understood or replicated, but avoid overloading with unnecessary jargon.

- Justify Your Choices: Explain why you chose specific methods over others and how they align with your research objectives.

- Stay Organized: Break your methodology into clear sections to improve readability and flow.

Results

In the results section, you present the findings of your research. This is where you report what you discovered, without interpretation (that comes in the Discussion section). Clarity is key, especially if you are using visuals to support your findings.

Presentation of Data

- Clearly present your research results. This can include numerical data, text analysis, or findings from experiments, surveys, or interviews.

Use of Visuals